Memory system for real-time multi-modal AI companions

A memory system helps a real-time multimodal assistant interact with you more intelligently. It lets your real-time agent remember details about you, update that information, and bring up important facts at the right moment.

Because the memory system stores and retrieves context for each multimodal input, you experience personalization, learning, and adaptation.

The memory system combines short-term and long-term memory: short-term memory tracks the current conversation, while long-term memory holds facts that persist across sessions.

| Year | CAGR (%) | |
|---|---|---|
| 2025 | 2 | 25 |
| 2033 | 10 | |

New products like MemU show how fast multimodal technology for AI companions is growing.

Key Takeaways

A memory system in AI companions enhances personalization by remembering your preferences and habits, making interactions feel more tailored.

Multimodal AI agents can process various types of data, such as text, images, and audio, allowing for smarter and more accurate responses.

Real-time data capture means the AI automatically remembers important details from your interactions, reducing the need for you to repeat information.

Context awareness enables the AI to connect past conversations and provide relevant answers, improving the overall user experience.

Privacy features, like encryption and data management, ensure your personal information remains secure while using AI companions.

Memory System Architecture

Components

When you use a real-time multimodal AI companion, you interact with a layered memory system. Its parts work together to help the AI remember and use information. Each part stores a different type of memory, which makes the AI more helpful in daily life.

| Component | Description |
|---|---|
| Core Memory | Stores high-priority, persistent information about you and the AI agent's identity. |
| Episodic Memory | Captures time-stamped events and interactions, helping the AI understand your routines. |
| Semantic Memory | Maintains abstract knowledge and facts, not tied to specific times or events. |
| Procedural Memory | Stores step-by-step guides and processes for helping you with tasks. |
| Resource Memory | Supports hybrid on-device/cloud memory for efficient storage and retrieval. |
| Knowledge Vault | Holds large-scale memories, letting the AI access lots of data when needed. |

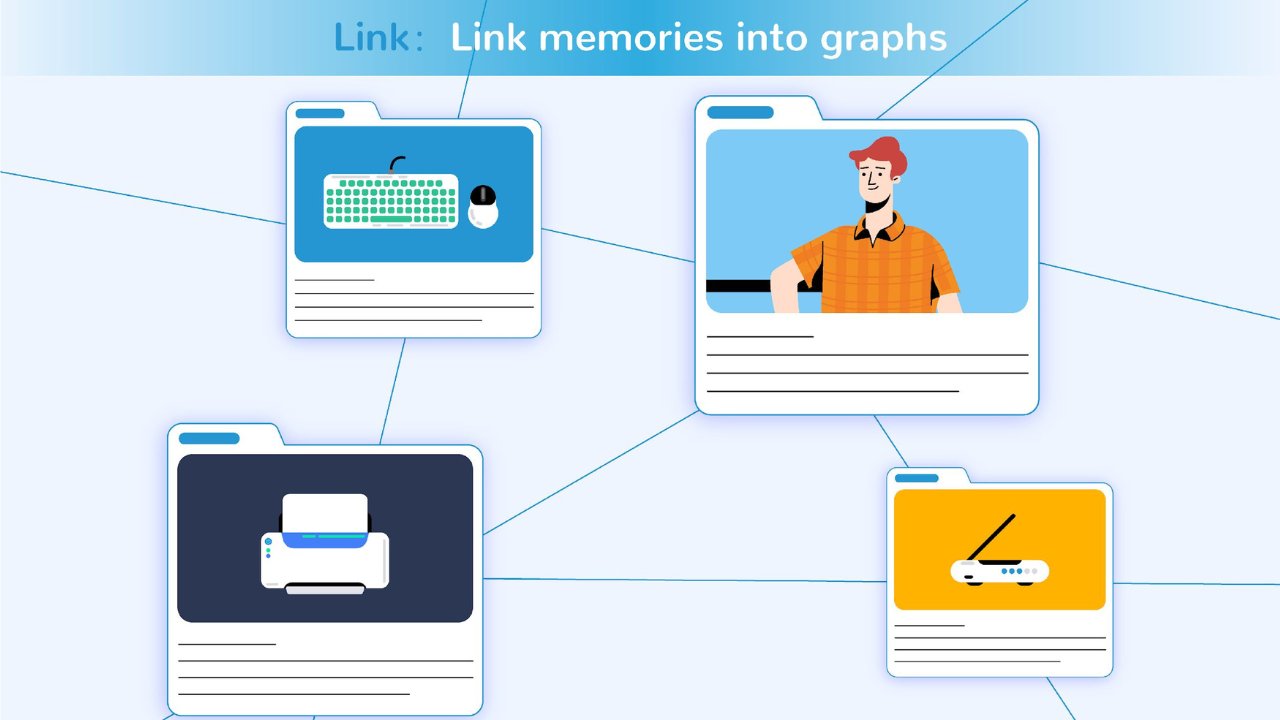

You see these components in action in advanced multimodal systems like MemU, a real-time multimodal memory system for AI companions. It uses memory graphs to connect different memories, so the AI can follow links from one memory to related ones. The system also uses intelligent folders, managed by a memory agent, to decide what to record or archive. This structure turns memory discovery into recall by association rather than plain search.
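
To make the idea of a memory graph concrete, here is a minimal sketch. It is not MemU's implementation: the `MemoryType`, `MemoryNode`, and `MemoryGraph` names are hypothetical, and the graph is a plain undirected adjacency map chosen only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class MemoryType(Enum):
    # One member per component in the table above.
    CORE = "core"
    EPISODIC = "episodic"
    SEMANTIC = "semantic"
    PROCEDURAL = "procedural"
    RESOURCE = "resource"
    KNOWLEDGE_VAULT = "knowledge_vault"


@dataclass(frozen=True)
class MemoryNode:
    node_id: str
    kind: MemoryType
    text: str


class MemoryGraph:
    """Hypothetical graph: nodes are memories, edges are associations."""

    def __init__(self):
        self.nodes = {}
        self.edges = defaultdict(set)

    def add(self, node):
        self.nodes[node.node_id] = node

    def link(self, a, b):
        # Associations are stored in both directions.
        self.edges[a].add(b)
        self.edges[b].add(a)

    def recall(self, node_id):
        """Return memories directly linked to node_id, ordered by id."""
        return [self.nodes[n] for n in sorted(self.edges[node_id])]


graph = MemoryGraph()
graph.add(MemoryNode("u1", MemoryType.CORE, "User's name is Sam"))
graph.add(MemoryNode("e1", MemoryType.EPISODIC, "Sam ordered an oat latte this morning"))
graph.add(MemoryNode("s1", MemoryType.SEMANTIC, "Sam prefers oat milk"))
graph.link("u1", "e1")
graph.link("u1", "s1")
related = [m.text for m in graph.recall("u1")]
print(related)
```

Starting from the core memory about the user, one hop along the edges surfaces both the recent event and the general preference, which is the kind of associative recall the text describes.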

Other frameworks, such as M3-Agent and Kruel.ai, use similar multimodal models. They fuse different memory types and use memory graphs to improve reasoning and explanation. These systems show how combining memories and sensor signals helps AI companions understand complex situations.

Data Capture

Multimodal AI agents need to capture data from many sources. You might speak, type, show an image, or use gestures. The memory system must handle all of these inputs in real time, and multimodal systems use several techniques to capture and process them.

| Technique | Description |
|---|---|
| Continuous Tracking | Monitors your non-verbal and verbal signals to measure participation and internal states. |
| Real-time Indicators | Captures your immediate reactions or feelings at specific times. |
| Multimodal Integration | Combines text, audio, and video to improve understanding and interaction. |

You benefit from data fusion in these systems. For example, MemU automatically captures memories during your conversations: the AI agent decides what information to keep, update, or archive, so you do not have to enter every detail yourself. The system uses multimodal models to process text, images, audio, and even sensor data, and fusing this information helps the AI understand your needs better.
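
The keep, update, or archive decision can be sketched as a small policy function. This is an illustration under stated assumptions, not MemU's actual logic: the `Candidate` type, the `importance` score (assumed to come from some upstream scorer), and the 0.5 threshold are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    KEEP = "keep"
    UPDATE = "update"
    ARCHIVE = "archive"
    SKIP = "skip"  # fact is already stored unchanged


@dataclass
class Candidate:
    key: str           # e.g. "favorite_color"
    value: str
    importance: float  # 0.0 to 1.0, assumed to come from an upstream scorer


def decide(candidate, store, keep_threshold=0.5):
    """Hypothetical policy for a newly observed fact."""
    known = store.get(candidate.key)
    if known == candidate.value:
        return Action.SKIP
    if known is not None:
        return Action.UPDATE       # the stored value is out of date
    if candidate.importance >= keep_threshold:
        return Action.KEEP
    return Action.ARCHIVE          # new but low priority


def apply_candidate(candidate, store, archive):
    action = decide(candidate, store)
    if action is Action.UPDATE:
        # Move the old value to the archive before overwriting it.
        archive.append((candidate.key, store[candidate.key]))
        store[candidate.key] = candidate.value
    elif action is Action.KEEP:
        store[candidate.key] = candidate.value
    elif action is Action.ARCHIVE:
        archive.append((candidate.key, candidate.value))
    return action


store, archive = {}, []
actions = [
    apply_candidate(Candidate("favorite_color", "blue", 0.9), store, archive),
    apply_candidate(Candidate("favorite_color", "green", 0.9), store, archive),
    apply_candidate(Candidate("small_talk", "rainy today", 0.1), store, archive),
]
print(actions, store, archive)
```

A real system would likely replace the fixed threshold with a learned or model-driven judgment; the point here is only that capture is a decision step, not a blind log of everything said.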

Multimodal systems also use sensor fusion to combine signals from different sources. For example, the AI might combine physiological signals, such as heart rate, with other cues to estimate your mood. Processing these signals in real time lets the AI respond quickly and accurately.
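
One simple way to combine such signals is late fusion: each modality produces its own score, and the scores are merged with a weighted average. The sketch below assumes each sensor already yields a stress estimate between 0 and 1; the modality names and weights are made up for illustration.

```python
def fuse_scores(scores, weights):
    """Weighted average of per-modality scores.

    Modalities with no current reading are simply absent from `scores`,
    so the remaining weights are renormalized.
    """
    total = sum(weights[m] for m in scores)
    if total == 0:
        raise ValueError("no weighted modalities available")
    return sum(scores[m] * weights[m] for m in scores) / total


# Hypothetical weights: voice is trusted most, heart rate least.
weights = {"voice": 0.5, "face": 0.3, "heart_rate": 0.2}

# The camera is off, so there is no "face" reading this time.
readings = {"voice": 0.8, "heart_rate": 0.3}
stress = fuse_scores(readings, weights)
print(round(stress, 3))
```

Renormalizing over the available modalities keeps the estimate usable when a sensor drops out, which matters in real-time settings where inputs arrive unevenly.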

Storage and Retrieval

After capturing data, the memory system must store and retrieve it efficiently. Multimodal AI companions keep information organized so it is easy to access. You see this in MemU, where memories are organized into files or profiles. For example, a "profile" file might store your name or preferences. This organization helps the AI adjust its responses if it misunderstands something or needs to update its knowledge.

Tip: Organizing memories into files or profiles helps the AI develop social skills, like understanding your feelings or correcting mistakes.

Multimodal models use semantic memory storage to capture the meaning and context of your data, so the AI can retrieve information even if you do not use the exact words. This makes conversations smoother and more natural. The system uses a scalable architecture to handle large amounts of data without slowing down.
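
Retrieval by meaning rather than exact wording usually relies on embeddings compared by cosine similarity. The sketch below shows that mechanism with a deliberately tiny, hand-written word-to-concept table standing in for a real embedding model; the `CONCEPTS` table and the sample memories are assumptions for illustration only.

```python
import math
from collections import Counter

# Hand-written stand-in for an embedding model: words map to shared concepts.
CONCEPTS = {
    "coffee": "hot_drink", "latte": "hot_drink", "espresso": "hot_drink",
    "song": "music", "playlist": "music", "music": "music",
    "meeting": "schedule", "calendar": "schedule", "appointment": "schedule",
}


def embed(text):
    """Turn text into a sparse concept-count vector."""
    words = text.lower().replace("?", "").replace(".", "").split()
    return Counter(CONCEPTS[w] for w in words if w in CONCEPTS)


def cosine(a, b):
    dot = sum(a[k] * b[k] for k in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def search(query, memories):
    """Return the stored memory whose vector is closest to the query."""
    q = embed(query)
    return max(memories, key=lambda m: cosine(q, embed(m)))


memories = [
    "User drinks an oat latte every morning.",
    "User has a dentist appointment on Friday.",
    "User made a running playlist.",
]
best = search("What coffee should I order?", memories)
print(best)
```

The query never says "latte", yet the latte memory wins because both map to the same concept. A production system would swap the lookup table for learned embeddings, but the comparison step looks much the same.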

You also benefit from simple integration with modern AI applications. Memory management functions, such as addMemory, searchMemories, and formatMemoriesForAI, cover the basic cycle of storing memories, finding relevant ones, and preparing them for the model. Multimodal systems use benchmarks like OK-VQA, WebQA, and COCO Captions to test how well they store and retrieve information. These benchmarks check whether the AI can answer questions, match images to text, and combine information from different sources.
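
The three functions named above suggest a store, search, and format cycle. The following sketch shows one way that cycle could look in Python. The snake_case names mirror the ones in the text, but the signatures, the `MemoryStore` type, and the keyword-overlap scoring are assumptions, not the API of any particular library.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    items: list = field(default_factory=list)


def add_memory(store, text, tags=()):
    """Save a memory with optional tags."""
    store.items.append({"text": text, "tags": set(tags)})


def search_memories(store, query, limit=3):
    """Rank memories by how many query words appear in their text or tags."""
    terms = set(query.lower().split())
    scored = []
    for item in store.items:
        words = set(item["text"].lower().split()) | item["tags"]
        overlap = len(terms & words)
        if overlap:
            scored.append((overlap, item["text"]))
    scored.sort(key=lambda pair: -pair[0])  # stable: ties keep insertion order
    return [text for _, text in scored[:limit]]


def format_memories_for_ai(memories):
    """Render memories as a block of text that can be placed in a prompt."""
    if not memories:
        return "No relevant memories."
    return "Relevant memories:\n" + "\n".join(f"- {m}" for m in memories)


store = MemoryStore()
add_memory(store, "User likes jazz", tags=["music"])
add_memory(store, "User is allergic to peanuts", tags=["health"])
prompt = format_memories_for_ai(search_memories(store, "recommend some music"))
print(prompt)
```

Keeping formatting separate from search makes it easy to change how memories are presented to the model without touching retrieval.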

Fusion plays a key role in storage and retrieval. By combining information from different modalities, the AI can connect related memories and give you better answers and support.

Multimodal AI Agents

Modalities

When you interact with multimodal AI agents, you use more than just words. These agents can understand text, images, audio, video, and even sensor data. You might type a message, show a photo, or speak a command. The AI listens, looks, and reads all at once. This ability comes from combining natural language processing, computer vision, and speech recognition. You get a smarter experience because the AI can switch between these types of input easily.

Here are some common modalities that multimodal systems handle:

Text (natural language)

Images (visual recognition)

Audio (speech and sound)

Video (motion and action)

Sensor data (like GPS or temperature)

Unlike older AI models that only use one type of data, multimodal AI agents can process many types at the same time. This helps the AI understand your context better. For example, if you send a photo and a voice note, the AI can analyze both and give you a more accurate answer.

Integration

Multimodal systems do more than collect different types of data. They combine and align this information to create a complete picture. You benefit from this integration because the AI can connect your words, tone, and facial expressions. The system uses an Input Module to handle data, a Fusion Module to combine it, and an Output Module to generate results. This setup lets the AI respond in a way that feels natural.
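
The Input, Fusion, and Output modules can be pictured as three small functions in a pipeline. This is a simplified sketch: the `Signal` type, the two-second alignment window, and the assumption that each input has already been decoded to text are all illustrative choices.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    modality: str    # "text", "audio", "image", ...
    content: str     # already-decoded description of the input
    timestamp: float


def input_module(raw_events):
    """Normalize raw (modality, content, timestamp) tuples into Signal objects."""
    return [Signal(m, c.strip(), t) for m, c, t in raw_events]


def fusion_module(signals, window=2.0):
    """Group signals that arrive within `window` seconds of a group's first signal."""
    groups, current = [], []
    for s in sorted(signals, key=lambda s: s.timestamp):
        if current and s.timestamp - current[0].timestamp > window:
            groups.append(current)
            current = []
        current.append(s)
    if current:
        groups.append(current)
    return groups


def output_module(group):
    """Produce one combined description for a group of aligned signals."""
    return " | ".join(f"{s.modality}: {s.content}" for s in group)


events = [
    ("text", " what is this? ", 10.0),
    ("image", "photo of a plant", 10.5),
    ("audio", "sigh", 15.0),
]
groups = fusion_module(input_module(events))
summary = output_module(groups[0])
print(summary)
```

The question and the photo arrive half a second apart, so they are fused into one request, while the later audio event forms its own group.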

Note: Multimodal integration mimics how people use their senses together. You see, hear, and feel at the same time, and so does the AI.

Let’s look at how MemU and MemPal use these ideas. MemU captures your conversations, images, and actions, then links them in a memory graph. MemPal also brings together text, audio, and visual data for a seamless user experience. Both systems use real-time processing to keep up with your needs.

Here is a table showing some challenges in integrating modalities:

| Challenge | Description |
|---|---|
| Data Integration Complexity | Merging different data sources needs advanced architectures and lots of training data. |
| Real-Time Processing Demands | Multimodal systems must process and sync inputs quickly for smooth interactions. |
| Contextual Understanding | Teaching AI to read meaning across modalities takes advanced reasoning and smart design. |

Multimodal AI agents give you a richer, more helpful experience by combining all these elements. You get answers that make sense because the AI understands your world from many angles.

AI User Experience

Personalization

You want your AI companion to feel like a true personal assistant. Memory systems make this possible by helping the AI remember your preferences, habits, and even your mood. When you chat, the AI uses short-term memory to keep track of your current conversation. It uses medium-term memory to remember what you talked about earlier in the day. Long-term memory helps the AI remember your favorite music, your birthday, or how you like your coffee. This layered memory structure gives you a more human-like experience.
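
The three layers described above can be sketched as a small class. The promotion rule used here (a fact mentioned twice in one day moves to long-term memory) is an assumption made for illustration, as are the `LayeredMemory` name and its parameters.

```python
from collections import deque


class LayeredMemory:
    def __init__(self, short_size=3, promote_after=2):
        self.short_term = deque(maxlen=short_size)  # most recent conversation turns
        self.medium_term = {}                        # fact -> mentions so far today
        self.long_term = set()                       # stable preferences
        self.promote_after = promote_after

    def observe(self, fact):
        self.short_term.append(fact)
        self.medium_term[fact] = self.medium_term.get(fact, 0) + 1
        # Hypothetical rule: repeated facts are treated as lasting preferences.
        if self.medium_term[fact] >= self.promote_after:
            self.long_term.add(fact)

    def end_of_day(self):
        """Drop the short- and medium-term layers; long-term memory persists."""
        self.short_term.clear()
        self.medium_term.clear()


memory = LayeredMemory()
memory.observe("likes flat white")
memory.observe("asked about rain")
memory.observe("likes flat white")
promoted = set(memory.long_term)
memory.end_of_day()
print(promoted, memory.long_term, len(memory.short_term))
```

The bounded deque gives short-term memory a natural forgetting behavior, while the long-term set survives the end-of-day reset.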

Memory systems let the AI remember your interactions and preferences.

The AI can track your emotional state and adjust its responses.

You get suggestions that match your changing interests.

The AI can help manage your schedule and remind you of important tasks.

For example, if you mention a favorite song in conversation, the AI can suggest similar music next time. If you often ask about the weather before leaving home, the AI will learn to give you weather updates automatically. These features make your daily life smoother and more enjoyable.

Tip: MemU uses automatic memory capture, so you do not have to repeat yourself. The AI remembers details like your name or favorite color and brings them up at the right time.

Personalization also means you have control over your data. Many systems let you choose what the AI remembers. You can see transparency reports that show how your data is used. Feedback tools help you tell the AI what works and what does not. These features build trust and make your AI companion even better.

Context Awareness

Context awareness means your AI does not just respond to single questions. It understands the bigger picture. When you talk to the AI, it remembers past conversations and uses that information to give better answers. For example, if you ask about a bill, the AI can recall your last payment and help you faster. If you mention a problem with your device, the AI can check your history and offer the right solution.

Memory systems help the AI keep track of your communication patterns.

The AI uses persistent memory to connect your actions across different apps and devices.

You get responses that fit your personal history, not just generic answers.

Imagine you use speech to ask about your schedule. The AI remembers your past meetings and suggests the best time for a new event. If you change your plans, the AI updates its memory and keeps everything organized. This context-aware approach helps you complete tasks more easily and makes the AI feel like a real partner.

Note: MemU’s memory activation feature brings back old memories when you need them. If you mention a topic you discussed weeks ago, the AI can recall it and continue the conversation where you left off.

Context-aware systems also help companies stand out. When AI companions keep context across workflows, you finish tasks faster and with less effort. You feel understood and supported, which makes you want to use the AI more often.

| Feature | How It Improves User Experience |
|---|---|
| Persistent Memory | Keeps context across sessions |
| Real-Time Speech Input | Responds quickly to spoken commands |
| Multimodal Data Fusion | Understands text, images, and speech |
| Automatic Memory Recall | Brings up relevant info when needed |

With real-time multimodal AI, you get a smarter, more helpful companion. The AI listens to your speech, watches for visual cues, and reads your data to give you the best possible support. These systems make your user experience richer and more personal every day.

Real-Time Multimodal Apps: Challenges

Latency

When you use real-time multimodal apps, you expect quick responses. Latency is the delay between your input and the AI’s reply. Several factors can slow down real-time processing:

Model complexity increases inference time.

Hardware limits, such as slow CPUs or GPUs, can cause delays.

Data input and output overhead, especially with images or audio, adds extra time.

Network issues in distributed systems create communication delays.

Resource scheduling and queuing can make you wait longer.

Processing video streams with large models, like CLIP, is often too demanding for devices with limited compute power. Synchronizing multimodal data, such as matching speech with visuals, can also lead to mismatches if not managed well. Benchmarks like HFTBench and StreetFighter help measure how well AI balances latency and accuracy in fast-paced tasks.
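
Before tuning latency, it helps to know which stage is slow. The sketch below times each pipeline stage with the standard library and flags the ones that exceed a budget. The stage names and millisecond budgets are invented for the example, and `time.sleep` stands in for real work.

```python
import time
from contextlib import contextmanager

timings = {}  # stage name -> accumulated seconds


@contextmanager
def timed(stage):
    """Record how long the enclosed block takes under the given stage name."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] = timings.get(stage, 0.0) + (time.perf_counter() - start)


def over_budget(measured, budget_ms):
    """Return the stages whose measured time (in ms) exceeds their budget."""
    return {
        stage: seconds * 1000
        for stage, seconds in measured.items()
        if seconds * 1000 > budget_ms.get(stage, float("inf"))
    }


with timed("speech_to_text"):
    time.sleep(0.02)   # stand-in for model inference
with timed("retrieval"):
    time.sleep(0.001)  # stand-in for a memory lookup

slow = over_budget(timings, {"speech_to_text": 10, "retrieval": 50})
print(sorted(slow))
```

Per-stage measurements like these make it clearer whether the delay comes from model inference, input/output overhead, or queuing, which are the factors listed above.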

Accuracy

You want your AI to give you the right answers every time. Multimodal systems must combine information from text, images, and audio to improve accuracy. Studies show that memory systems like MemPal help users, especially older adults, find information faster and with fewer mistakes. When the AI uses voice and visual cues together, you spend less time searching and feel more confident in the results.

Efficient memory management is key for long sequences. Techniques like PagedAttention store the model's attention key-value cache in fixed-size blocks, which reduces wasted memory when serving long sequences. Systems like Mem0 use smart retrieval to avoid repeating or missing facts. These methods help the AI stay accurate and responsive, even with lots of data and complex processing.
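
PagedAttention, as used in LLM serving, keeps the attention key-value cache in fixed-size blocks and maps each sequence to its blocks through a block table, much like virtual memory paging. The toy allocator below illustrates only that bookkeeping idea; it stores no tensors, and the `PagedCache` class and its method names are hypothetical.

```python
class PagedCache:
    """Toy block allocator: tracks which physical blocks each sequence owns."""

    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        self.free = list(range(num_blocks))  # ids of unused physical blocks
        self.tables = {}                     # seq_id -> list of physical block ids
        self.lengths = {}                    # seq_id -> number of tokens stored

    def append_token(self, seq_id):
        n = self.lengths.get(seq_id, 0)
        # A new block is needed for the first token or when the last block is full.
        if n % self.block_size == 0:
            if not self.free:
                raise MemoryError("no free blocks")
            self.tables.setdefault(seq_id, []).append(self.free.pop(0))
        self.lengths[seq_id] = n + 1

    def release(self, seq_id):
        """Return a finished sequence's blocks to the free list."""
        self.free.extend(self.tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


cache = PagedCache(num_blocks=4, block_size=2)
for _ in range(3):
    cache.append_token("a")   # 3 tokens need 2 blocks
cache.append_token("b")       # 1 token needs 1 block
table_a = list(cache.tables["a"])
table_b = list(cache.tables["b"])
cache.release("a")
print(table_a, table_b, cache.free)
```

Because blocks are allocated only as tokens arrive, a sequence never reserves more than one partly empty block, and released blocks can be reused immediately by other sequences.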

Privacy

Privacy matters when you trust AI with your personal data. Multimodal memory systems must follow strict rules to keep your information safe. Here is a table showing important privacy aspects:

| Aspect | Description |
|---|---|
| GDPR Compliance | Requires lawful, clear, and secure handling of your data. |
| Data Management | Uses encryption, access controls, and ways to delete your information. |
| Human Oversight | Lets you challenge automated decisions and manage your own data. |
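
Two of the data management controls in the table, access control and deletion, can be sketched in a few lines. This is an illustration only: the `PrivateMemoryStore` class is hypothetical, and encryption is deliberately left out because a real system should rely on a vetted cryptography library rather than hand-written code.

```python
class PrivateMemoryStore:
    def __init__(self):
        self._data = {}    # user_id -> list of memories
        self._grants = {}  # user_id -> set of readers that user has approved

    def remember(self, user_id, text):
        self._data.setdefault(user_id, []).append(text)

    def grant(self, user_id, reader):
        """The user allows `reader` to see their memories."""
        self._grants.setdefault(user_id, set()).add(reader)

    def read(self, user_id, reader):
        """Only the user or an approved reader may read; return a copy."""
        if reader != user_id and reader not in self._grants.get(user_id, set()):
            raise PermissionError(f"{reader} may not read memories of {user_id}")
        return list(self._data.get(user_id, []))

    def forget_user(self, user_id):
        """Erase everything stored for the user; return how many memories were removed."""
        self._grants.pop(user_id, None)
        return len(self._data.pop(user_id, []))


store = PrivateMemoryStore()
store.remember("sam", "prefers oat milk")
store.remember("sam", "birthday is in June")
store.grant("sam", "calendar_app")
visible = store.read("sam", "calendar_app")
try:
    store.read("sam", "ad_network")
    blocked = False
except PermissionError:
    blocked = True
removed = store.forget_user("sam")
print(visible, blocked, removed)
```

Deleting the grants together with the data means an erased user leaves no lingering permissions behind.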

AI can sometimes learn sensitive details by connecting different pieces of information. Even if data is anonymous, the risk of revealing private facts remains. Designers must balance powerful AI features with strong privacy protections to keep you safe.

You have seen how memory systems shape your experience with real-time multimodal AI companions. These systems help AI remember your preferences and respond in smarter ways. High engagement shows their value:

| Metric | Retention (%) | Engagement (Sessions/Day) | Duration (Minutes) |
|---|---|---|---|
| Next-day retention | 50-60 | 100-250 | 100-120 |
| 7-day retention | 30 | N/A | N/A |
| 30-day retention | 13-18 | N/A | N/A |

You benefit from ongoing improvements in multimodal AI. Look for these future directions:

Robotics integration

Predictive analytics for personalized care

Understanding context, emotional state, and intention

Emerging products like MemU lead the way, making your multimodal AI experience more personal and effective.

FAQ

What is a multimodal memory system in AI companions?

A multimodal memory system lets your AI remember information from text, images, audio, and more. You get smarter responses because the AI connects different types of data.

How does MemU capture my information automatically?

MemU watches your interactions and saves important details without asking you to enter them. You do not need to type or upload anything. The system works in the background.

Tip: You can review what MemU remembers by checking your profile files.

Can I control what my AI companion remembers?

Yes, you can manage your data. You choose which memories to keep or delete. Many systems show you reports so you know how your information is used.

Why does real-time processing matter for AI companions?

Real-time processing helps your AI respond quickly. You get answers right away, even when you use speech, images, or video. Fast replies make your experience smoother.

Is my personal data safe with multimodal AI memory systems?

Most systems use encryption and strict rules to protect your data. You can see privacy settings and decide who can access your information.

| Privacy Feature | What It Does |
|---|---|
| Encryption | Keeps your data safe |
| Access Control | Limits who sees info |
| Data Deletion | Lets you erase data |
