GLM-4.6 Language Model Ushers in Enhanced Reasoning and Coding Abilities

GLM-4.6 improves on GLM-4.5 in reasoning, coding, and agentic tool use. The context window grows from 128K to 200K tokens, the writing style is more refined, and tasks complete with fewer tokens. Users also cite its cost-effectiveness and versatility for both coding and creative writing. See how the latest version compares:

| Feature | GLM-4.5 | GLM-4.6 |
|---|---|---|
| Context Window | 128K tokens | 200K tokens |
| Coding Performance | Lower scores | Higher scores |
| Reasoning | Basic | Advanced |

Key Takeaways

GLM-4.6 offers a larger context window of 200K tokens, allowing you to handle bigger documents and complex data without splitting tasks.

The model shows improved coding and reasoning skills, achieving higher benchmark scores and providing more accurate results for real-world tasks.

GLM-4.6 uses fewer tokens than GLM-4.5 for the same work. The figures cited in this article range from about 15% (on coding tasks) to about 30%, depending on the evaluation.

The GLM-4.6 family supports multimodal tasks through the GLM-4.6V variant, which works with both text and visuals. The base GLM-4.6 model takes text input.

The model's refined writing style makes interactions feel more natural, improving communication for coding, creative writing, and teamwork.

GLM-4.6 Language Model Advancements

Expanded Context Window

You can now work with much larger documents and more complex data using the GLM-4.6 language model. The context window has grown from 128K tokens in GLM-4.5 to 200K tokens in GLM-4.6. This means you can process longer texts, analyze bigger datasets, and keep more information in memory at once.

The expanded context window lets you handle large documents and complex workflows.

You get better comprehension and more consistent reasoning over long inputs.

Tasks like document summarization and legal analysis become easier and more accurate.

This upgrade helps you manage projects that need a lot of information at once. You do not have to split your work into smaller parts as often.
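As a rough illustration of what the larger window means in practice, the sketch below estimates whether a document fits in the 200K-token window and splits it only when it does not. The four-characters-per-token ratio is a common rule of thumb for English text, not GLM-4.6's actual tokenizer, and the function names and the reserved output budget are assumptions made for this example.

```python
# Rough context-budget check. CHARS_PER_TOKEN is a heuristic for English text,
# not the model's real tokenizer; use a real tokenizer for exact counts.
CONTEXT_WINDOW = 200_000      # GLM-4.6 context window, in tokens
CHARS_PER_TOKEN = 4           # assumption: rule-of-thumb ratio


def estimate_tokens(text: str) -> int:
    """Estimate the token count of text from its character length."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def split_to_fit(text: str, reserved_for_output: int = 8_000) -> list[str]:
    """Return the text whole if it fits the budget, else in budget-sized chunks."""
    budget_tokens = CONTEXT_WINDOW - reserved_for_output
    if estimate_tokens(text) <= budget_tokens:
        return [text]
    chunk_chars = budget_tokens * CHARS_PER_TOKEN
    return [text[i:i + chunk_chars] for i in range(0, len(text), chunk_chars)]
```

With these assumptions, anything up to roughly 768,000 characters goes through in a single request, and only larger inputs are split.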

Coding and Reasoning Upgrades

The GLM-4.6 language model brings stronger coding and reasoning skills. You will notice higher benchmark scores and better performance in real-world tasks. The model can solve harder problems and write more accurate code.

| Feature | GLM-4.5 | GLM-4.6 | Improvement |
|---|---|---|---|
| Inferential Performance | Moderate | High | Significant improvement |
| Tool Use During Inference | Limited | Enhanced | Better support |
| Integration into Agent Frameworks | Basic | Improved | More effective integration |
| Benchmark Performance | Moderate | High | Outperforms GLM-4.5 |
| Token Efficiency | N/A | 15% better | Improved efficiency |

You can use the model for tasks like building websites or solving coding challenges. It generates content that matches your needs and often uses fewer tokens to finish the job. In tests, the model created a travel website with strong design and relevant content. You get more reliable results and save time on complex projects.

Agentic Capabilities

GLM-4.6 adds stronger agentic abilities, starting with tool use during inference. The image and document features listed below (processing images and handling documents that mix text and visuals) are provided by the multimodal GLM-4.6V variant, which understands layouts, tables, and figures together so your outputs stay clear and organized.

| Feature | Description |
|---|---|
| Native Multimodal Function Calling | Directly inputs images, screenshots, or PDFs for a full loop of perception and execution. |
| Interleaved Multimodal Content | Combines text and visuals in documents for better coherence and usability. |
| Improved Long-Document Understanding | Processes layout, tables, and figures together, keeping meaning across different formats. |

These upgrades show up in real-world tasks. For example, the model achieved a 48.6% win rate against Claude Sonnet 4 on coding tasks while using about 15% fewer tokens than GLM-4.5. In one case, the model planned and executed Python function calls to retrieve stock prices, showing it can work with external tools on its own. These features help you automate more tasks with less effort.
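The stock-price case can be sketched as a small tool-dispatch step. The tool schema below follows the widely used OpenAI-style function-calling format, which is an assumption about the request shape rather than something this article specifies. `get_stock_price`, its placeholder prices, and the hand-written `tool_call` standing in for a model response are all hypothetical.

```python
import json

# Hypothetical tool: a real one would call a market-data service.
PLACEHOLDER_PRICES = {"AAA": 100.0, "BBB": 50.0}  # made-up values for the demo


def get_stock_price(symbol: str) -> dict:
    """Look up a price in the placeholder table."""
    if symbol not in PLACEHOLDER_PRICES:
        return {"symbol": symbol, "error": "unknown symbol"}
    return {"symbol": symbol, "price": PLACEHOLDER_PRICES[symbol]}


# Tool description sent along with the chat request (assumed OpenAI-style schema).
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_stock_price",
        "description": "Return the latest price for a ticker symbol.",
        "parameters": {
            "type": "object",
            "properties": {"symbol": {"type": "string"}},
            "required": ["symbol"],
        },
    },
}]

REGISTRY = {"get_stock_price": get_stock_price}


def run_tool_call(tool_call: dict) -> dict:
    """Execute one tool call and wrap the result as a `tool` message."""
    name = tool_call["function"]["name"]
    args = json.loads(tool_call["function"]["arguments"])  # arguments arrive as a JSON string
    result = REGISTRY[name](**args)
    return {"role": "tool", "tool_call_id": tool_call["id"], "content": json.dumps(result)}


# Stand-in for a tool call the model might return.
tool_call = {"id": "call_1", "function": {"name": "get_stock_price", "arguments": '{"symbol": "AAA"}'}}
tool_message = run_tool_call(tool_call)
```

In a full loop, the resulting `tool` message is appended to the conversation and sent back so the model can write its final answer.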

Writing Style Improvements

GLM-4.6 writes in a style that feels more natural and closer to human expression than earlier models, with less awkward or robotic phrasing. It performs well in creative writing and role-playing tasks, which makes your interactions smoother and more enjoyable.

Users have given positive feedback on the model’s ability to write clearly and creatively. It now ranks at the top for creative writing among open models. You can trust it to communicate your ideas effectively, whether you are coding, writing stories, or working in a team.

Performance and Evaluation

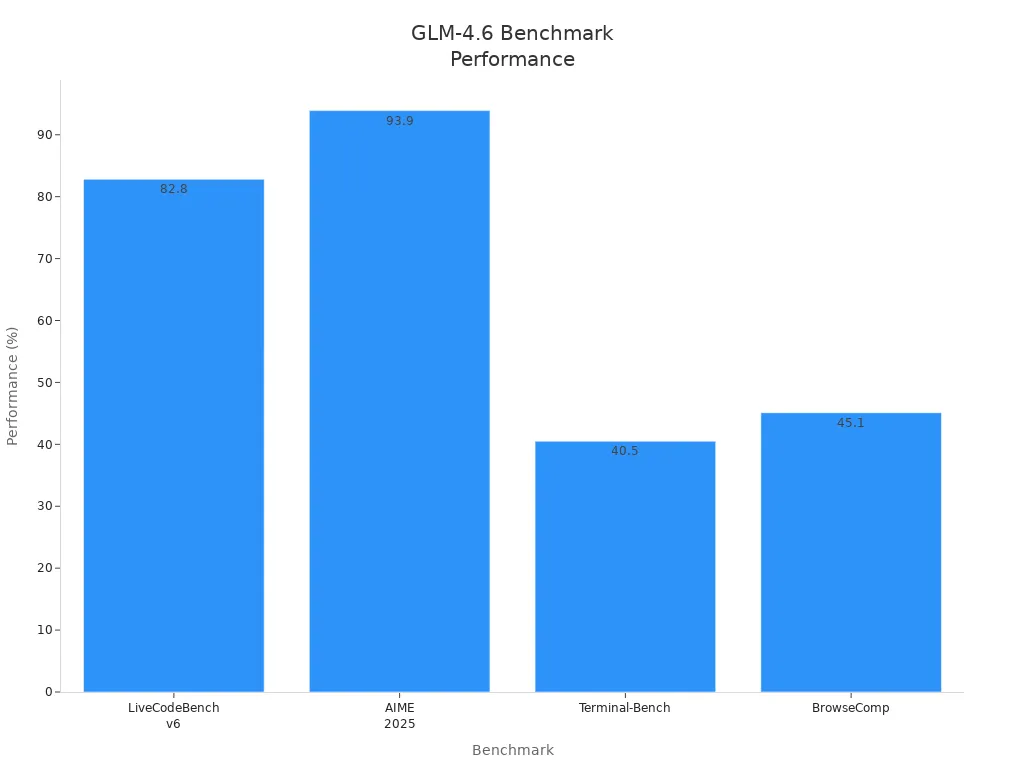

Benchmark Results

The table below shows how GLM-4.6 performs on standard benchmarks. It leads in several areas, especially coding and expert-level reasoning:

| Benchmark | GLM-4.6 Performance | Notes |
|---|---|---|
| LiveCodeBench v6 | #1, 82.8% | Dominates in practical coding tasks |
| HLE | #1 | Leading performance on Humanity's Last Exam, an expert-level knowledge and reasoning test |
| AIME 2025 | #3, 93.9% | Strong performance in reasoning tasks |
| Terminal-Bench | #3, 40.5% | Competitive in multi-turn terminal tasks |
| BrowseComp | #4, 45.1% | Significant improvement over predecessor |
| GPQA | #15 | Gaps in graduate-level reasoning |

You can also view the results in the chart below:

These results show that you get strong coding and reasoning abilities, with some room for growth in advanced academic tasks.

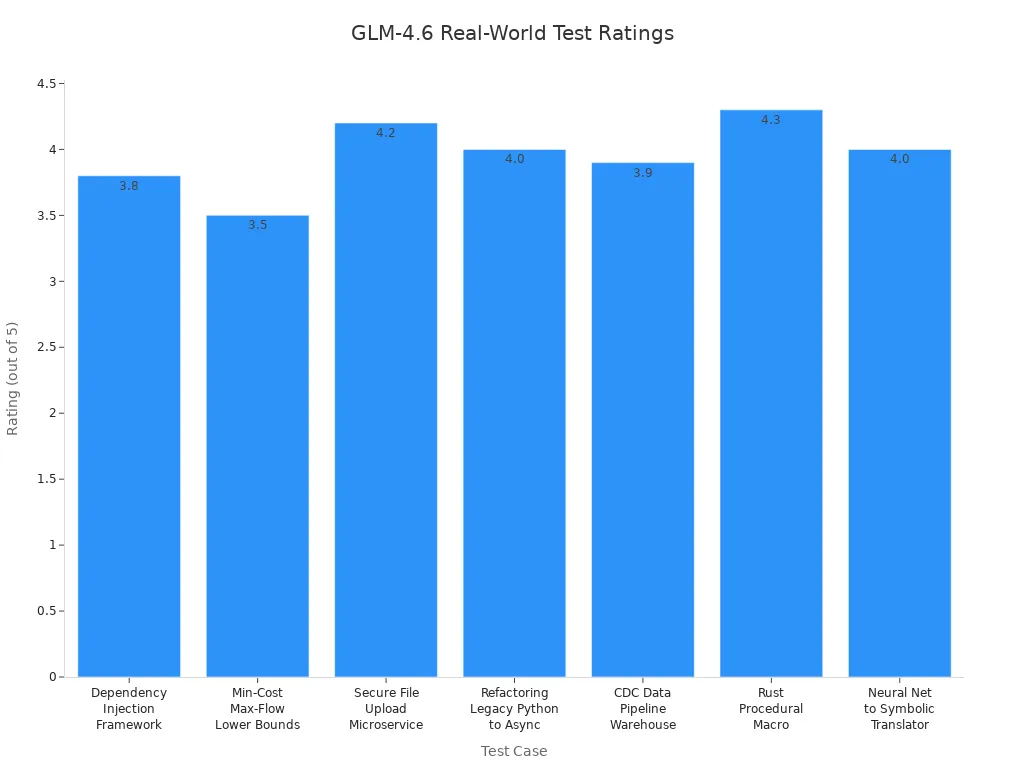

Real-World Testing

Reviewers have also tested the model on practical tasks such as building frameworks, optimizing algorithms, and handling secure uploads. The table below summarizes these tests:

| Test Case | Use Case | Reviewer Feedback | Rating |
|---|---|---|---|
| Dependency Injection Framework in Python | Framework design and type management | Created a working DI container, missed some features | 3.8 / 5 |
| Min-Cost Max-Flow (C++) | Graph optimization | Partial implementation, good theory | 3.5 / 5 |
| Secure File Upload Microservice in Go | Secure backend design | Well-structured, met requirements | 4.2 / 5 |
| Refactoring Legacy Python Code to Async | Async migration | Efficient code, solid reasoning | 4.0 / 5 |
| CDC Data Pipeline to Analytics Warehouse | Data engineering | Robust pipeline, good error handling | 3.9 / 5 |
| Rust Procedural Macro for Query Builders | Compile-time code generation | Strong syntax reasoning | 4.3 / 5 |
| Neural Network → Symbolic Expression Translator | ML introspection | Clear workflow, good metrics | 4.0 / 5 |

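Ratings range from 3.5 to 4.3 out of 5, so the model is a solid fit for engineering, software, and data projects, although reviewers noted partial implementations and missing features in some cases. Its large context window helps you manage complex tasks.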
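For a single summary number, the ratings in the table above can be averaged. The short calculation below uses only the seven scores listed there; the variable names are illustrative.

```python
from statistics import mean

# Reviewer ratings copied from the table above (out of 5).
ratings = {
    "Dependency Injection Framework in Python": 3.8,
    "Min-Cost Max-Flow (C++)": 3.5,
    "Secure File Upload Microservice in Go": 4.2,
    "Refactoring Legacy Python Code to Async": 4.0,
    "CDC Data Pipeline to Analytics Warehouse": 3.9,
    "Rust Procedural Macro for Query Builders": 4.3,
    "Neural Network → Symbolic Expression Translator": 4.0,
}

average = round(mean(ratings.values()), 2)
best = max(ratings, key=ratings.get)      # highest-rated test case
weakest = min(ratings, key=ratings.get)   # lowest-rated test case
print(f"average {average} / 5, best: {best}, weakest: {weakest}")
```

The average works out to about 3.96 out of 5, with the Rust procedural macro task rated highest and the min-cost max-flow task lowest.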

Model Comparisons

The table below lists key metrics for GLM-4.6, including its head-to-head win rate against Claude Sonnet 4:

| Metric | GLM-4.6 |
|---|---|
| Parameters | 357B |
| Context Window | 200K |
| Token Efficiency | 30% improved |
| Win Rate (CC-Bench) | 48.6% against Claude Sonnet 4 |
| Performance Benchmarks | Competitive across 8 benchmarks |

GLM-4.6 comes close to top models like Claude Sonnet 4 on many benchmarks, with a 48.6% win rate on CC-Bench.

You get better token efficiency and strong results in coding and translation.

In coding challenges, it completes tasks with detailed outputs, sometimes needing extra prompts but providing more context.

You can trust this model for both speed and accuracy in real-world applications.

GLM-4.6 Language Model Efficiency

Token Usage

You save tokens when you use the GLM-4.6 language model. The model uses about 30% fewer tokens than older versions. This means you can process longer texts and complete more tasks without running out of space. The context window now supports up to 200K tokens, so you can work with bigger documents.

You can make your tasks even more efficient by following these tips:

Break tasks into smaller steps. This helps the model give cleaner results.

Disable unnecessary reasoning for simple tasks. You can use the parameter `disable_reasoning: True` to speed up answers.

Set a limit for completion tokens. This keeps responses short and focused.

Use structured output formats like JSON or lists. These formats help the model avoid extra words.

You get more value from each token. Cleaner outputs mean less waste and faster results.
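The tips above can be combined into one request payload. This is a sketch under assumptions: `disable_reasoning` is the parameter named above, while `max_tokens`, `response_format`, and the `glm-4.6` model ID follow the common OpenAI-style chat schema and may be named differently by your provider. `build_payload` is a hypothetical helper.

```python
import json


def build_payload(prompt: str, simple_task: bool = True, max_tokens: int = 512) -> dict:
    """Build a token-frugal chat request body (field names partly assumed)."""
    payload = {
        "model": "glm-4.6",                           # assumed model ID
        "messages": [
            # Asking for JSON keeps the answer compact and easy to parse.
            {"role": "system", "content": "Reply with a JSON object only."},
            {"role": "user", "content": prompt},
        ],
        "max_tokens": max_tokens,                     # assumed name: cap on completion tokens
        "response_format": {"type": "json_object"},   # assumed name: structured output
    }
    if simple_task:
        payload["disable_reasoning"] = True           # parameter from the tip above
    return payload


body = json.dumps(build_payload("List three sorting algorithms."))
```

Passing `simple_task=False` leaves reasoning on for harder problems, which fits the first tip: keep each step small and spend reasoning tokens only where they help.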

Here is a table showing how GLM-4.6 ranks on popular benchmarks. Comparable GLM-4.5 figures are not available:

| Benchmark | GLM-4.6 Performance | GLM-4.5 Performance | Notes |
|---|---|---|---|
| SWE-bench | Top 10 | N/A | Lags 10 points behind internal results |
| Terminal-Bench | Top 10 | N/A | Reproduces internal results |
| LiveCodeBench | Top 10 | N/A | Reproduces internal results |
| Finance Industry | Underperforms | N/A | Struggles compared to GLM-4.5 |

GLM-4.6 ranks in the top 10 on the coding benchmarks but underperforms on finance tasks. The model uses fewer tokens for each task, which helps you work faster.

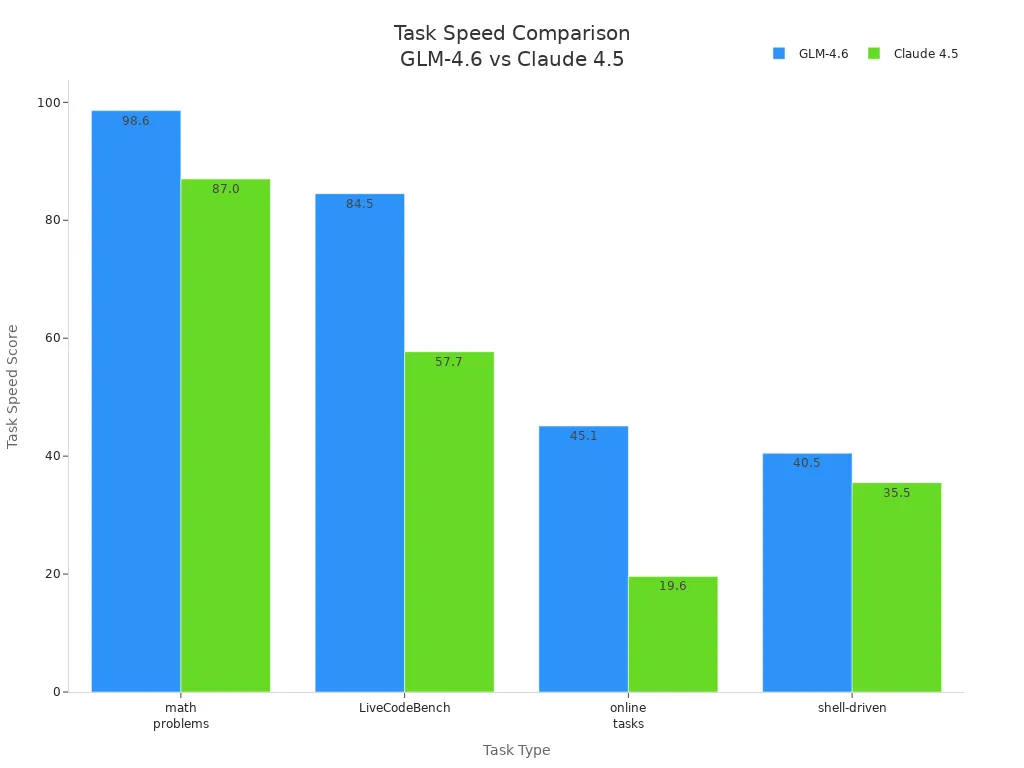

Task Speed

GLM-4.6 scores higher than Claude 4.5 on several task benchmarks covering math problems, coding, and online tasks. Note that the figures below are benchmark scores rather than latency measurements: the 45.1 and 40.5 values match the BrowseComp and Terminal-Bench results reported earlier.

| Task | GLM-4.6 | Claude 4.5 |
|---|---|---|
| Math problems | 98.6 | 87.0 |
| LiveCodeBench | 84.5 | 57.7 |
| Online tasks | 45.1 | 19.6 |
| Shell-driven tasks | 40.5 | 35.5 |

Higher scores can mean fewer failed attempts and retries on coding or math tasks, so you spend less time waiting for a usable result. Combined with lower token usage, this helps you stay productive.
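To check speed on your own workload, you can time requests directly. The helper below is a generic sketch: `time_call` is a hypothetical name, and `fake_request` stands in for a real API call so the example runs offline.

```python
import time
from statistics import median


def time_call(fn, *args, repeats: int = 5):
    """Run fn(*args) several times; return (last result, median seconds)."""
    durations = []
    result = None
    for _ in range(repeats):
        start = time.perf_counter()
        result = fn(*args)
        durations.append(time.perf_counter() - start)
    return result, median(durations)


def fake_request(prompt: str) -> str:
    """Placeholder for a model call; sleeps briefly to simulate latency."""
    time.sleep(0.01)
    return prompt.upper()


answer, seconds = time_call(fake_request, "solve 2 + 2", repeats=3)
print(f"median latency: {seconds:.3f}s")
```

The median is used instead of the mean so that a single slow request, for example a cold start, does not distort the result.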

Access and Deployment

API Integration

You can connect to the GLM-4.6 language model using an API. This process works in many environments and helps you start quickly. Here are the steps you need to follow:

Sign up at the developer portal and get your API key.

Use the endpoint `https://api.z.ai/api/paas/v4/chat/completions` with the POST method.

Add authentication headers: `Authorization: Bearer <your-api-key>` and `Content-Type: application/json`.

Build your request payload. Set `"model": "glm-4.6"` in the payload, or `"model": "glm-4.6v"` for the multimodal variant. You can turn on thinking steps or streaming mode if you want more control.

Make the API call in your favorite environment, such as Python.

For local access, download the model weights and use a compatible framework.

You can use different tools to connect, such as:

Python

Node.js

cURL

These options let you work with the model in the way that fits your workflow.
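The steps above translate into a short Python script. This sketch uses only the standard library. The endpoint and headers come from the steps above, while the `ZAI_API_KEY` environment variable, the helper names, and the OpenAI-style response shape (`choices[0].message.content`) are assumptions to verify against the official API reference.

```python
import json
import os
import urllib.request

ENDPOINT = "https://api.z.ai/api/paas/v4/chat/completions"


def build_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Assemble the POST request with auth headers and a JSON payload."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(api_key: str, model: str, prompt: str) -> str:
    """Send the request and return the reply text (response shape is assumed)."""
    with urllib.request.urlopen(build_request(api_key, model, prompt), timeout=60) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]


if __name__ == "__main__" and os.environ.get("ZAI_API_KEY"):
    # ZAI_API_KEY is a hypothetical variable name; the call runs only if it is set.
    # "glm-4.6" is an assumed model ID; the steps above also mention "glm-4.6v".
    print(ask(os.environ["ZAI_API_KEY"], "glm-4.6", "Write a haiku about tokens."))
```

Keeping request construction separate from sending makes it easy to inspect or unit-test the payload without spending tokens.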

Coding Agents and Chat

You can use GLM-4.6 to power coding agents and chatbots. These agents help you write code, answer questions, and automate tasks. You can set up chat interfaces that use the model for real-time help. Many developers use the API to build coding assistants that understand project context and generate code that matches your needs.

GLM-4.6 also supports agents that plan trips, manage reservations, and handle complex workflows. In companies, you can use the model to search large document archives and get answers from many sources at once. Data scientists use it to find patterns in big log files or summarize long reports.

Tip: You can build chatbots and coding agents on platforms that support API calls, making it easy to add smart features to your apps.
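A chat interface mostly comes down to managing the message history that is sent with each request. The sketch below keeps a running conversation and drops the oldest turns when an estimated token budget is exceeded. The `ChatSession` class, the four-characters-per-token estimate, and the pluggable `send` callable are all assumptions for illustration, not part of any official SDK.

```python
from typing import Callable


class ChatSession:
    """Keep chat history and trim old turns to stay within a token budget."""

    def __init__(self, send: Callable[[list], str], system: str, budget_tokens: int = 200_000):
        self.send = send                      # function that calls the model API
        self.system = {"role": "system", "content": system}
        self.turns: list[dict] = []
        self.budget_tokens = budget_tokens

    @staticmethod
    def _estimate(message: dict) -> int:
        return len(message["content"]) // 4 + 1   # rough heuristic, not a tokenizer

    def _total(self) -> int:
        return sum(self._estimate(m) for m in [self.system, *self.turns])

    def _trim(self) -> None:
        # Drop the oldest turns first, but always keep the latest message.
        while len(self.turns) > 1 and self._total() > self.budget_tokens:
            self.turns.pop(0)

    def ask(self, text: str) -> str:
        self.turns.append({"role": "user", "content": text})
        self._trim()
        reply = self.send([self.system, *self.turns])
        self.turns.append({"role": "assistant", "content": reply})
        return reply


# Offline stand-in for the API: echoes the last user message.
session = ChatSession(send=lambda msgs: "echo: " + msgs[-1]["content"],
                      system="You are a coding assistant.")
print(session.ask("Explain list comprehensions."))
```

In a real agent, `send` would wrap the API call, and the system message would carry project context such as coding conventions or file summaries.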

Local Deployment

You can run GLM-4.6 on your own hardware for privacy and speed. This works well for custom workflows, legal assistants, or on-premise data processing. Here are the hardware requirements:

| Hardware Component | Description |
|---|---|
| 8xH200 GPUs | Lets you deploy GLM-4.6 for advanced applications and heavy workloads |
| Local Supercomputers | Gives you fast, private processing for sensitive or large-scale projects |

Many organizations use local deployment to power autonomous agents, manage knowledge, and support software development. You can use the model to generate code, analyze data, or answer questions—all on your own systems.
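A quick back-of-the-envelope calculation shows why a multi-GPU node such as the 8xH200 setup mentioned in the FAQ is needed. The sketch below estimates weight memory only, using the 357B parameter count cited in this article and the 141 GB of memory on an NVIDIA H200. It ignores the KV cache, activations, and framework overhead, so real requirements are higher. The function name and the precision table are illustrative.

```python
PARAMS = 357e9                    # parameter count cited in this article
H200_MEMORY_GB = 141              # memory capacity of one NVIDIA H200
BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}


def weight_memory_gb(params: float, precision: str) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[precision] / 1e9


node_memory_gb = 8 * H200_MEMORY_GB   # total memory of an 8xH200 node
for precision in BYTES_PER_PARAM:
    needed = weight_memory_gb(PARAMS, precision)
    fits = needed < node_memory_gb
    print(f"{precision}: {needed:.0f} GB of weights, fits in {node_memory_gb} GB node: {fits}")
```

At BF16 the weights alone take about 714 GB, which fits within the 1,128 GB of an 8xH200 node and leaves room for the KV cache that long contexts require.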

You now have a powerful tool with GLM-4.6. This model helps you solve problems, write code, and manage large projects with ease. You get faster results and use fewer tokens. To start using GLM-4.6, follow these steps:

Sign up for an account on the Zhipu AI website.

Verify your email or phone number.

Subscribe to the GLM Coding Plan.

Access the API dashboard and create your API key.

Set up your development environment and test the API.

Start building smarter solutions today. GLM-4.6 gives you the edge you need.

FAQ

What makes GLM-4.6 better than GLM-4.5?

You get a bigger context window, stronger coding skills, and faster task speed. The model uses fewer tokens and writes more naturally. You can handle larger projects and get more accurate results.

Can I use GLM-4.6 for both coding and creative writing?

Yes! You can use GLM-4.6 for coding, creative writing, and data analysis. The model adapts to your needs and gives you clear, human-like responses.

How do I access GLM-4.6 for my projects?

You sign up for an account, get your API key, and connect using the provided endpoint. You can use Python, Node.js, or cURL to start building with the model.

What hardware do I need for local deployment?

You need strong hardware, like 8xH200 GPUs or a local supercomputer. This setup lets you run GLM-4.6 privately and process large workloads.

Does GLM-4.6 support multimodal tasks?

Yes, through the multimodal GLM-4.6V variant. You can input images, screenshots, or PDFs, and the model understands both text and visuals, so you can work with many types of data in one place. The base GLM-4.6 model takes text input.
